Instagram now demotes vaguely ‘inappropriate’ content

Instagram is home to plenty of scantily clad models and edgy memes that may get fewer views starting today. Instagram now says, “We have begun reducing the spread of posts that are inappropriate but do not go against Instagram’s Community Guidelines.” That means if a post is sexually suggestive but doesn’t depict a sex act or nudity, it could still get demoted. Similarly, if a meme doesn’t constitute hate speech or harassment but is considered in bad taste, lewd, violent or hurtful, it could get fewer views.

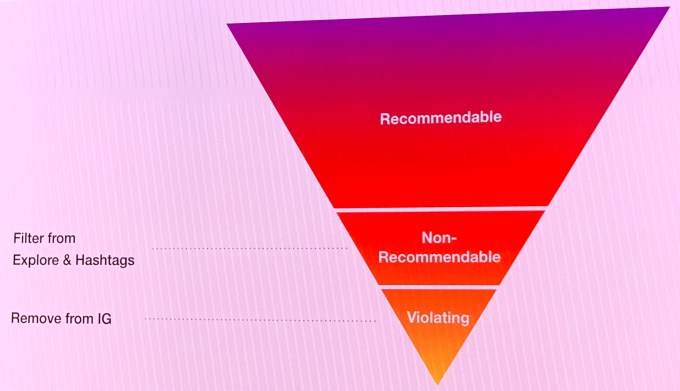

Specifically, Instagram says, “this type of content may not appear for the broader community in Explore or hashtag pages,” which could severely hurt creators’ ability to gain new followers. The news came amid a flood of “Integrity” announcements that Facebook revealed today at a press event at its Menlo Park headquarters, all aimed at safeguarding its family of apps.

“We’ve started to use machine learning to determine if the actual media posted is eligible to be recommended to our community,” said Will Ruben, Instagram’s product lead for Discovery. Instagram is now training its content moderators to label borderline content when they’re hunting down policy violations, and it then uses those labels to train an algorithm to identify such content.

These posts won’t be fully removed from the feed, and Instagram tells me that, for now, the new policy won’t impact Instagram’s feed or Stories bar. But Facebook CEO Mark Zuckerberg’s November manifesto described the need to broadly reduce the reach of this “borderline content,” which on Facebook means being shown lower in News Feed. That policy could easily be expanded to Instagram in the future. That would likely reduce creators’ ability to reach their existing fans, which could in turn hurt their ability to monetize through sponsored posts or to drive traffic to other revenue sources like Patreon.

Facebook’s Henry Silverman explained, “As content gets closer and closer to the line of our Community Standards at which point we’d remove it, it actually gets more and more engagement. It’s not something unique to Facebook but inherent in human nature.” The borderline content policy aims to counteract this incentive to toe the policy line. “Just because something is allowed on one of our apps doesn’t mean it should show up at the top of News Feed or that it should be recommended or that it should be able to be advertised,” said Facebook’s head of News Feed Integrity, Tessa Lyons.

This all makes sense when it comes to clickbait, false news and harassment, which no one wants on Facebook or Instagram. But when it comes to sexualized but not explicit content that has long been uninhibited and in fact popular on Instagram, or memes or jokes that might offend some people despite not being abusive, this is a significant step up in censorship by Facebook and Instagram.

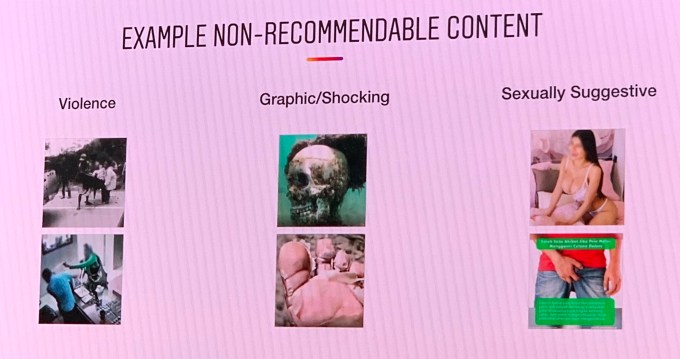

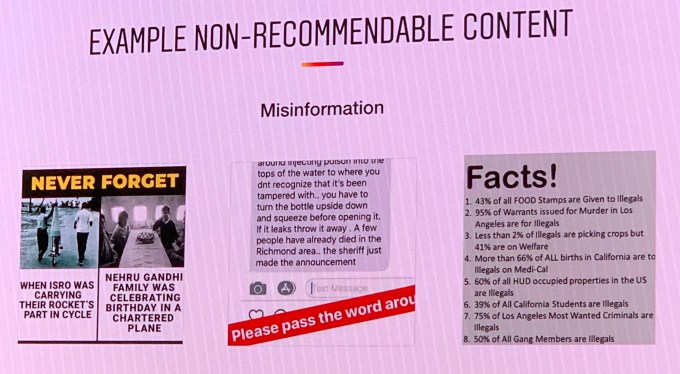

Creators currently have no guidelines about what constitutes borderline content: nothing in Instagram’s rules or terms of service even mentions non-recommendable content or what qualifies as such. The only information Instagram has provided is what it shared at today’s event. The company specified that violent, graphic/shocking, sexually suggestive, misinformation and spam content can be deemed “non-recommendable” and therefore won’t appear on Explore or hashtag pages.

[Update: After we published, Instagram posted to its Help Center a brief note about its borderline content policy, but with no visual examples, mentions of impacted categories other than sexually suggestive content, or indications of what qualifies content as “inappropriate.” So officially, it’s still leaving users in the dark.]

Instagram denied one creator’s claim that the app reduced their feed and Stories reach after one of their posts that actually violated the content policy was taken down.

One female creator with around half a million followers likened receiving a two-week demotion that massively reduced their content’s reach to Instagram defecating on them. “It just makes it like, ‘Hey, how about we just show your photo to like 3 of your followers? Is that good for you?’ . . . I know this sounds kind of tin-foil hatty but . . . when you get a post taken down or a story, you can set a timer on your phone for two weeks to the godd*mn f*cking minute and when that timer goes off you’ll see an immediate change in your engagement. They put you back on the Explore page and you start getting followers.”

As you can see, creators are pretty passionate about Instagram demoting their reach. Regarding the feed/Stories reach reduction, Instagram’s Will Ruben said: “No, that’s not happening. We distinguish between feed and surfaces where you’ve taken the choice to follow somebody, and Explore and hashtag pages where Instagram is recommending content to people.”

The questions now are whether borderline content demotions are ever extended to Instagram’s feed and Stories, and how content is classified as recommendable, non-recommendable or violating. With artificial intelligence involved, this could turn into another situation where Facebook is seen as shirking its responsibilities in favor of algorithmic efficiency — but this time in removing or demoting too much content rather than too little.

Given the lack of clear policies to point to, the subjective nature of deciding what’s offensive but not abusive, Instagram’s 1 billion user scale and its nine years of allowing this content, there are sure to be complaints and debates about fair and consistent enforcement.

Contributor: Social – TechCrunch

Reviewed by mimisabreena on Thursday, April 11, 2019